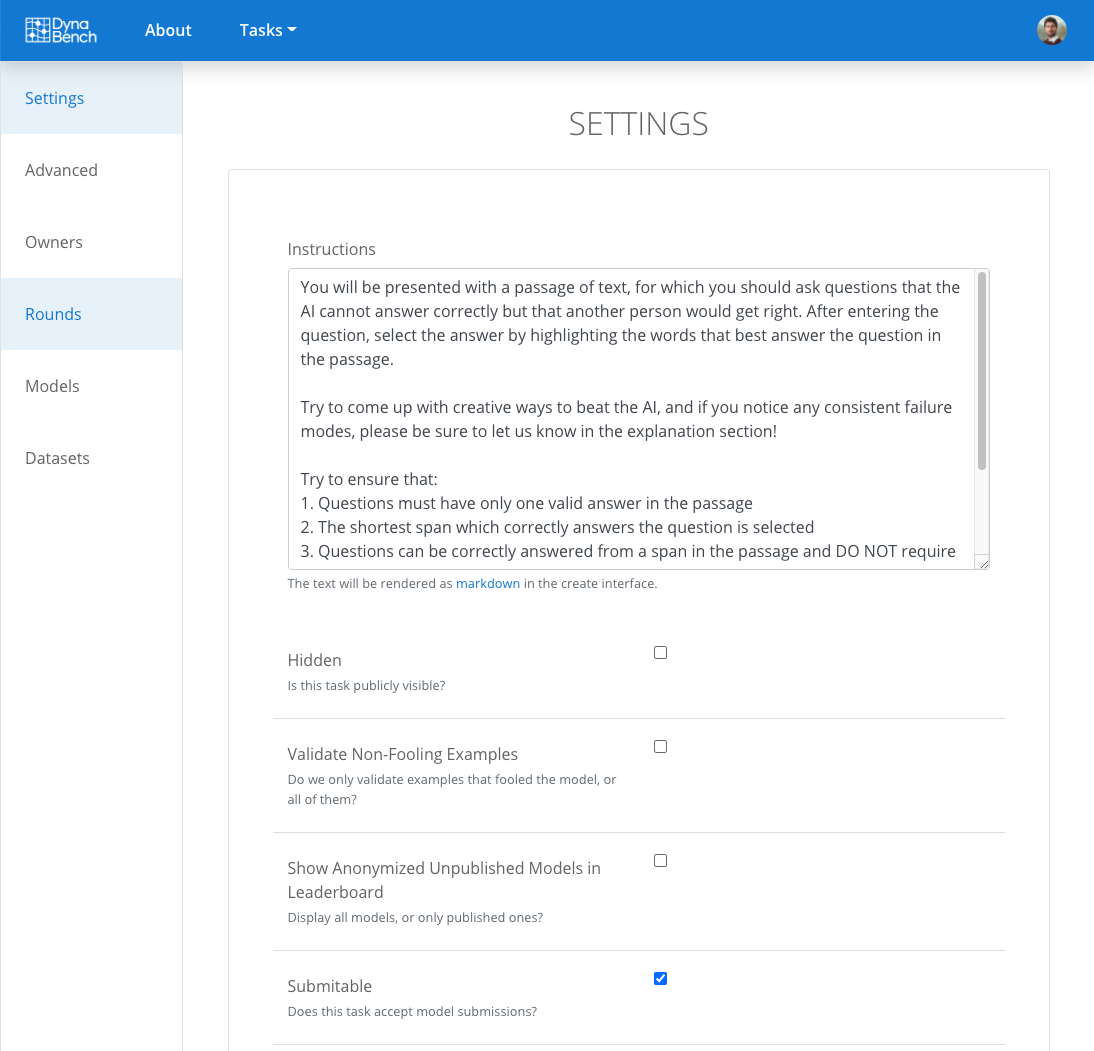

We introduce Dynatask, an open-source system for setting up custom NLP tasks. It aims to greatly lower the technical knowledge and effort required to host and evaluate state-of-the-art NLP models and to conduct model-in-the-loop data collection with crowdworkers.

Dynatask is integrated with Dynabench, a research platform for rethinking benchmarking in AI that facilitates human-and-model-in-the-loop data collection and evaluation. To create a task, users only need to write a short task configuration file, from which the relevant web interfaces and model-hosting infrastructure are generated automatically.
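
To illustrate the idea of generating an interface from a configuration file, here is a minimal sketch in Python. It is not Dynatask's actual schema or code. The config keys (`task_name`, `context`, `input`, `output`), the field types (`string`, `multiclass`), the widget names, and the helper `generate_interface` are all hypothetical, chosen only to show how a declarative task description can be mapped mechanically onto form widgets.

```python
import json

# Hypothetical task configuration. The keys and type names are
# illustrative assumptions, NOT the real Dynatask config schema.
TASK_CONFIG_JSON = """
{
  "task_name": "sentiment_demo",
  "context": [{"name": "passage", "type": "string"}],
  "input": [{"name": "statement", "type": "string"}],
  "output": [
    {"name": "label", "type": "multiclass",
     "labels": ["negative", "neutral", "positive"]}
  ]
}
"""

# Hypothetical mapping from config field types to web-form widgets.
WIDGETS = {"string": "text_box", "multiclass": "radio_buttons"}


def generate_interface(config: dict) -> list:
    """Derive a flat list of form widgets from a (hypothetical) task config."""
    fields = []
    for section in ("context", "input", "output"):
        for field in config.get(section, []):
            widget = WIDGETS.get(field["type"])
            if widget is None:
                # Reject types the generator does not know how to render.
                raise ValueError(f"unsupported field type: {field['type']}")
            entry = {"section": section, "name": field["name"], "widget": widget}
            if "labels" in field:
                # Multiclass outputs carry their label set as widget options.
                entry["options"] = list(field["labels"])
            fields.append(entry)
    return fields


config = json.loads(TASK_CONFIG_JSON)
for widget in generate_interface(config):
    print(widget)
```

Keeping the generator table-driven means that, in this sketch, supporting a new field type only requires a new entry in the `WIDGETS` mapping rather than new interface code.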

The system is available at https://dynabench.org/ and the full library can be found at https://github.com/facebookresearch/dynabench.
